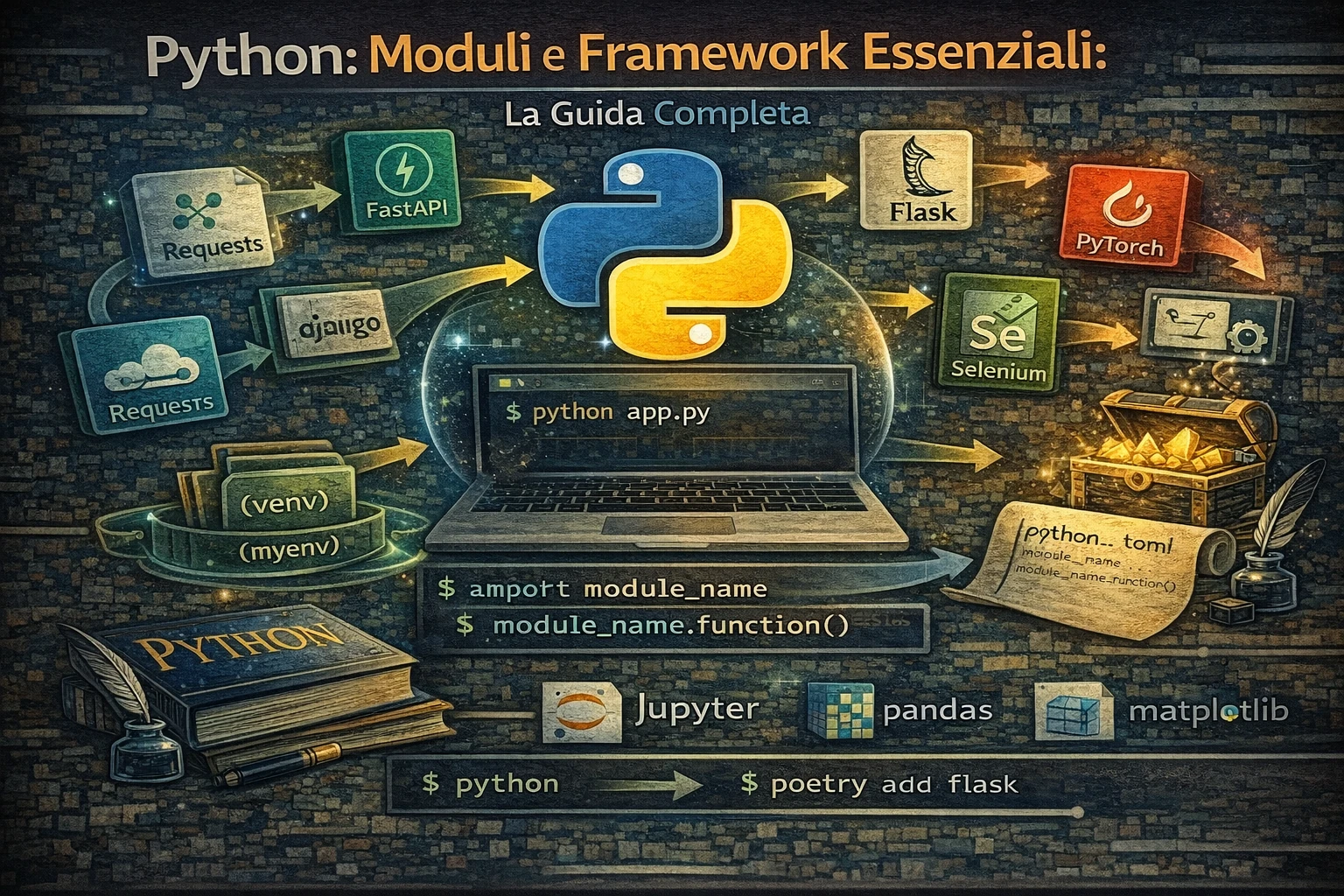

Python: Essential Modules and Frameworks

Python libraries and frameworks every developer should know

Python owes much of its popularity to its rich ecosystem of libraries and frameworks. This guide presents the most useful modules and the key frameworks for each area of development, from web programming to machine learning, from automation to data analysis.

- Standard library: Python's most powerful built-in modules

- Web frameworks: Django, Flask, and FastAPI for modern web development

- Data science: NumPy, pandas, and Matplotlib for data analysis

- Machine learning: scikit-learn, TensorFlow, PyTorch

- Automation: Selenium, Beautiful Soup, and Requests for web scraping

- Development tools: testing, debugging, packaging, distribution

- Practical code examples for the libraries covered

Guide contents

Python standard library

- Essential built-in modules

- File and directory management

- Networking and web

- Date and time

- Logging and debugging

Web development

- Web frameworks

- Django - the complete web framework

- Flask - flexible micro-framework

- FastAPI - modern framework for APIs

- API development

- Template engines

Data science and analysis

Machine learning and AI

- Machine learning libraries

- scikit-learn - general-purpose ML

- TensorFlow/Keras - deep learning

- Computer vision

- Natural language processing

Automation and scripting

- Web scraping and automation

- Beautiful Soup - HTML parsing

- Selenium - browser automation

- GUI development

- Task automation

Development tools

Python standard library

Essential built-in modules

collections - specialized data structures
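
A minimal sketch of four commonly used types from collections. The word list and the Point record are illustrative data, not part of any real project.

```python
from collections import Counter, defaultdict, deque, namedtuple

words = ["django", "flask", "django", "fastapi", "flask", "django"]

# Counter tallies hashable items.
counts = Counter(words)
top_two = counts.most_common(2)  # [('django', 3), ('flask', 2)]

# defaultdict creates the missing value (here an empty list) on first access.
by_initial = defaultdict(list)
for word in words:
    by_initial[word[0]].append(word)

# A deque with maxlen keeps only the most recent items.
recent = deque(maxlen=3)
for word in words:
    recent.append(word)

# namedtuple builds lightweight, immutable records with named fields.
Point = namedtuple("Point", ["x", "y"])
origin = Point(x=0, y=0)
```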

itertools - advanced iterators
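
A short sketch of a few itertools building blocks, using made-up sample values. Note that groupby only groups consecutive items, so the input is sorted by the same key first.

```python
from itertools import chain, combinations, count, groupby, islice

# chain concatenates iterables lazily.
merged = list(chain([1, 2], [3, 4]))  # [1, 2, 3, 4]

# combinations yields every unordered pair.
pairs = list(combinations("ABC", 2))

# islice takes a finite slice of an infinite iterator.
first_even = list(islice(count(start=0, step=2), 5))  # [0, 2, 4, 6, 8]

# groupby groups *consecutive* items with the same key.
names = sorted(["pandas", "numpy", "pytest", "scipy"], key=len)
by_length = {length: list(group) for length, group in groupby(names, key=len)}
```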

functools - functional programming
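
A minimal sketch of four functools helpers. The functions fibonacci, power, logged, and greet are hypothetical names chosen for illustration.

```python
from functools import lru_cache, partial, reduce, wraps


@lru_cache(maxsize=None)
def fibonacci(n: int) -> int:
    """Memoized recursion: each value is computed only once."""
    return n if n < 2 else fibonacci(n - 1) + fibonacci(n - 2)


def power(base, exponent):
    return base ** exponent


# partial pre-fills some arguments of an existing function.
square = partial(power, exponent=2)

# reduce folds a sequence into a single value.
total = reduce(lambda acc, x: acc + x, [1, 2, 3, 4], 0)


def logged(func):
    """Decorator that prints the function name before each call."""
    @wraps(func)  # preserves func.__name__ and the docstring
    def wrapper(*args, **kwargs):
        print(f"calling {func.__name__}")
        return func(*args, **kwargs)
    return wrapper


@logged
def greet(name):
    return f"Hello, {name}"
```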

File and directory management

pathlib - modern path handling
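
A small sketch of typical pathlib operations. It works inside a temporary directory so nothing is left on disk; the reports/2024/summary.txt layout is an assumption made for the example.

```python
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    base = Path(tmp)

    # The / operator joins path segments portably.
    report = base / "reports" / "2024" / "summary.txt"
    report.parent.mkdir(parents=True, exist_ok=True)

    # Read and write text without calling open() explicitly.
    report.write_text("hello\n", encoding="utf-8")
    content = report.read_text(encoding="utf-8")

    # rglob searches recursively.
    found = sorted(p.name for p in base.rglob("*.txt"))

    name, stem, suffix = report.name, report.stem, report.suffix
```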

shutil - advanced file operations
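
A minimal sketch of copying, archiving, and deleting a directory tree with shutil. The directory and file names are illustrative, and everything happens in a temporary directory.

```python
import shutil
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    src = root / "project"
    src.mkdir()
    (src / "notes.txt").write_text("draft", encoding="utf-8")

    # copytree copies a whole directory and returns the destination.
    backup = Path(shutil.copytree(src, root / "backup"))
    copied = (backup / "notes.txt").read_text(encoding="utf-8")

    # make_archive takes the base name without extension and returns the archive path.
    archive = Path(shutil.make_archive(str(root / "project_archive"), "zip", root_dir=src))
    archive_exists = archive.exists()

    # rmtree deletes a directory and all of its contents.
    shutil.rmtree(backup)
    backup_removed = not backup.exists()
```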

Networking and web

urllib - built-in HTTP client
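
A sketch of building and inspecting a request with urllib. The URL and the User-Agent string are placeholders, and the network call itself is left commented out so the snippet runs offline.

```python
from urllib.parse import parse_qs, urlencode, urlparse
from urllib.request import Request

base_url = "https://example.com/search"  # placeholder endpoint

# urlencode escapes query parameters safely.
query = urlencode({"q": "python pathlib", "page": 2})
url = f"{base_url}?{query}"

# urlparse and parse_qs split a URL back into its parts.
parts = urlparse(url)
params = parse_qs(parts.query)

# A Request object carries the URL, headers, and (optionally) a body.
request = Request(url, headers={"User-Agent": "guide-example/1.0"})

# To actually send it:
# from urllib.request import urlopen
# with urlopen(request, timeout=10) as response:
#     body = response.read().decode("utf-8")
```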

email - email handling
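
A minimal sketch of composing a message with an attachment using the email package. The addresses and the CSV payload are made up; sending would additionally require smtplib and a real SMTP server, which is only hinted at in the comment.

```python
from email.message import EmailMessage

message = EmailMessage()
message["From"] = "sender@example.com"      # placeholder address
message["To"] = "recipient@example.com"     # placeholder address
message["Subject"] = "Weekly report"
message.set_content("The report is attached.")

# Adding an attachment turns the message into multipart/mixed.
message.add_attachment(
    b"col_a,col_b\n1,2\n",
    maintype="application",
    subtype="octet-stream",
    filename="report.csv",
)

attachment_names = [part.get_filename() for part in message.iter_attachments()]

# To send it (assumes an SMTP server reachable at localhost):
# import smtplib
# with smtplib.SMTP("localhost") as smtp:
#     smtp.send_message(message)
```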

Date and time

datetime - working with dates and times
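
A short sketch of date arithmetic, formatting, and parsing with datetime. The dates are arbitrary, and the fixed +01:00 offset stands in for a real time zone only to keep the example free of external time zone data.

```python
from datetime import datetime, timedelta, timezone

# Timezone-aware datetimes avoid ambiguity.
start = datetime(2024, 1, 31, 9, 30, tzinfo=timezone.utc)

# timedelta handles date arithmetic, including month and leap-year rollover.
deadline = start + timedelta(days=30, hours=2)
elapsed = deadline - start

# ISO 8601 round trip.
iso_text = deadline.isoformat()
parsed = datetime.fromisoformat(iso_text)

# Custom formatting with strftime.
label = deadline.strftime("%d/%m/%Y %H:%M")

# Convert to another offset (fixed +01:00 used for illustration).
plus_one = timezone(timedelta(hours=1))
local = start.astimezone(plus_one)
```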

Logging and debugging

logging - professional logging system
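
A minimal sketch of configuring a named logger with its own handler and format. The logger name guide.example is hypothetical, and output goes to an in-memory stream here so it can be inspected; in a real program the handler would usually write to stderr or a file.

```python
import io
import logging

stream = io.StringIO()
handler = logging.StreamHandler(stream)
handler.setFormatter(logging.Formatter("%(levelname)s:%(name)s:%(message)s"))

logger = logging.getLogger("guide.example")
logger.setLevel(logging.INFO)
logger.addHandler(handler)
logger.propagate = False  # keep records out of the root logger

logger.debug("hidden")  # below the INFO threshold, so it is dropped
logger.info("Service started on port %d", 8000)

try:
    1 / 0
except ZeroDivisionError:
    # exception() logs at ERROR level and appends the traceback.
    logger.exception("Calculation failed")

output = stream.getvalue()
```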

Web development

Web frameworks

Django - the complete web framework

Django features:

- Powerful ORM with a built-in migration system

- Automatically generated admin interface

- Complete authentication system

- Template engine with inheritance

- Middleware for cross-cutting concerns

- Built-in security (CSRF and XSS protection)

Flask - flexible micro-framework
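
A minimal Flask sketch, assuming Flask is installed. The routes and the item payload are illustrative; the built-in test client is used so the example runs without starting a server.

```python
from flask import Flask, jsonify

app = Flask(__name__)


@app.route("/")
def index():
    return "Hello from Flask"


@app.route("/api/items/<int:item_id>")
def get_item(item_id):
    # The <int:...> converter validates and converts the URL segment.
    return jsonify({"id": item_id, "name": f"item-{item_id}"})


# Exercise the route in-process with the test client.
with app.test_client() as client:
    response = client.get("/api/items/7")
    payload = response.get_json()
```

To serve the app during development, the flask command-line tool can be pointed at the module that contains app.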

FastAPI - modern framework for APIs

FastAPI advantages:

- Automatic validation with Pydantic

- Auto-generated OpenAPI documentation

- High performance with async support

- Native type hints for better IDE support

- Advanced dependency injection system

API development

API development requires attention to the contract, versioning, authentication, and performance. In Python, besides FastAPI, common choices are:

- Django REST Framework for Django projects

- Flask-RESTX for REST APIs on Flask

- Pydantic or Marshmallow for payload validation

Template engines

Template engines generate HTML or other documents from dynamic data. The most widely used:

- Jinja2 (general-purpose and very widespread)

- Django Templates (built into Django)

- Mako (a lightweight alternative)
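
A simple Jinja2 sketch, assuming the jinja2 package is installed. The template text and the variables are made up; it shows variable substitution, a filter, and a loop.

```python
from jinja2 import Template

template = Template(
    "Hello {{ name }}! You have {{ items|length }} items: "
    "{% for item in items %}{{ item }}{% if not loop.last %}, {% endif %}{% endfor %}."
)

rendered = template.render(name="Ada", items=["numpy", "pandas"])
# rendered == "Hello Ada! You have 2 items: numpy, pandas."
```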

Data science and analysis

Data manipulation

pandas - structured data analysis
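
A minimal pandas sketch, assuming pandas is installed. The sales table is invented sample data; the example shows a derived column, a group-by aggregation, and boolean filtering.

```python
import pandas as pd

sales = pd.DataFrame(
    {
        "region": ["North", "South", "North", "South"],
        "product": ["A", "A", "B", "B"],
        "units": [10, 7, 3, 12],
        "unit_price": [2.5, 2.5, 4.0, 4.0],
    }
)

# Vectorized arithmetic creates a new column for every row at once.
sales["revenue"] = sales["units"] * sales["unit_price"]

# Aggregate revenue per region.
by_region = sales.groupby("region")["revenue"].sum()

# Boolean masks filter rows.
large_orders = sales[sales["units"] >= 10]
```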

NumPy - scientific computing
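
A short NumPy sketch, assuming NumPy is installed. The small matrix is arbitrary; the example shows element-wise operations, reductions along an axis, broadcasting, and matrix multiplication.

```python
import numpy as np

matrix = np.arange(6).reshape(2, 3)  # [[0, 1, 2], [3, 4, 5]]

# Element-wise arithmetic needs no explicit loops.
doubled = matrix * 2

# Reduce along axis 0 to get one mean per column.
column_means = matrix.mean(axis=0)  # [1.5, 2.5, 3.5]

# Broadcasting subtracts the 1-D means from every row.
centered = matrix - column_means

# The @ operator performs matrix multiplication.
product = matrix @ matrix.T  # [[5, 14], [14, 50]]
```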

Visualization

Matplotlib - comprehensive plotting

Plotly - interactive visualizations

Statistical analysis

For advanced statistical analysis, use:

- SciPy for statistical tests and distributions

- statsmodels for statistical models and regressions
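
A sketch of a two-sample test with SciPy, assuming SciPy and NumPy are installed. The two groups are synthetic samples generated with a fixed seed, not real measurements; Welch's t-test (equal_var=False) is used because it does not assume equal variances.

```python
import numpy as np
from scipy import stats

# Synthetic data: two normal samples whose true means differ by 3.
rng = np.random.default_rng(seed=42)
group_a = rng.normal(loc=50.0, scale=5.0, size=200)
group_b = rng.normal(loc=53.0, scale=5.0, size=200)

# Welch's t-test for a difference in means.
result = stats.ttest_ind(group_a, group_b, equal_var=False)
t_statistic, p_value = result.statistic, result.pvalue

significant = p_value < 0.05
```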

Databases and ORMs

To work cleanly with relational databases:

- SQLAlchemy (one of the most widely used ORMs)

- SQLModel (type hints and Pydantic models on top of SQLAlchemy)

- Peewee (lightweight)
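
A minimal SQLAlchemy sketch, assuming SQLAlchemy 1.4 or later is installed. The User model and its columns are hypothetical, and an in-memory SQLite database is used so no setup is required.

```python
from sqlalchemy import Column, Integer, String, create_engine, select
from sqlalchemy.orm import Session, declarative_base

Base = declarative_base()


class User(Base):
    __tablename__ = "users"

    id = Column(Integer, primary_key=True)
    name = Column(String(50), nullable=False)


# In-memory SQLite database, discarded when the program exits.
engine = create_engine("sqlite:///:memory:")
Base.metadata.create_all(engine)

with Session(engine) as session:
    session.add_all([User(name="Ada"), User(name="Linus")])
    session.commit()

    # select() builds the query; scalars() unwraps single-column rows.
    names = session.execute(select(User.name).order_by(User.name)).scalars().all()
```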

Machine learning and AI

Machine learning libraries

scikit-learn - general-purpose ML
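
A compact scikit-learn sketch, assuming scikit-learn is installed. It uses the bundled Iris dataset; the choice of logistic regression and the split parameters are illustrative, not a recommendation.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)

# Hold out a stratified test set to estimate generalization.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# A pipeline applies scaling and the classifier as one estimator.
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=200))
model.fit(X_train, y_train)

accuracy = model.score(X_test, y_test)
predictions = model.predict(X_test)
```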

TensorFlow/Keras - deep learning

Computer vision

For processing images and video:

- OpenCV for classical computer vision

- Pillow for image manipulation

- scikit-image for scientific image analysis

Natural language processing

For text and NLP:

- spaCy for linguistic analysis

- NLTK for preprocessing

- transformers for modern pretrained models

Automation and scripting

Web scraping and automation

Beautiful Soup - HTML parsing
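
A minimal Beautiful Soup sketch, assuming the beautifulsoup4 package is installed. The HTML is an inline sample rather than a downloaded page; in a scraper it would typically come from an HTTP response.

```python
from bs4 import BeautifulSoup

html = """
<html>
  <body>
    <h1>Python libraries</h1>
    <ul class="links">
      <li><a href="https://numpy.org">NumPy</a></li>
      <li><a href="https://pandas.pydata.org">pandas</a></li>
    </ul>
  </body>
</html>
"""

# html.parser is the parser bundled with Python.
soup = BeautifulSoup(html, "html.parser")

title = soup.find("h1").get_text(strip=True)

# CSS selectors pick the anchors inside the list.
links = [(a.get_text(strip=True), a["href"]) for a in soup.select("ul.links a")]
```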

Selenium - browser automation

GUI development

To build graphical interfaces:

- Tkinter (included with Python)

- PyQt / PySide (advanced interfaces)

- Flet (modern UIs built on Flutter)

Task automation

To schedule and orchestrate jobs:

- schedule for simple periodic jobs

- APScheduler for advanced jobs

- Celery for distributed tasks

Development tools and testing

Testing frameworks

pytest - advanced testing
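
A minimal sketch of pytest-style tests. The slugify function is a hypothetical unit under test; pytest collects any function whose name starts with test_ and reports failing assert statements with the compared values.

```python
def slugify(title: str) -> str:
    """Lowercase the title and join its words with hyphens."""
    return "-".join(title.lower().split())


def test_slugify_lowercases_and_joins():
    assert slugify("Hello World") == "hello-world"


def test_slugify_collapses_whitespace():
    assert slugify("  many   spaces ") == "many-spaces"


def test_slugify_empty_string():
    assert slugify("") == ""
```

Saved in a file whose name starts with test_, these functions are discovered and run by the pytest command without any extra registration.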

Packaging and distribution

To distribute libraries or applications:

- pyproject.toml as the modern standard

- build to create packages

- twine to publish to PyPI

Build example, run from the project root: python -m build

Publishing, once the distributions are in dist/: twine upload dist/*

Performance and profiling

cProfile and line_profiler
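
A minimal sketch of function-level profiling with cProfile and pstats from the standard library. The slow_sum function is a made-up workload; line_profiler is a separate third-party tool for line-by-line timings and is not shown here.

```python
import cProfile
import io
import pstats


def slow_sum(n: int) -> int:
    """Deliberately simple loop to give the profiler something to measure."""
    total = 0
    for i in range(n):
        total += i * i
    return total


profiler = cProfile.Profile()
profiler.enable()
result = slow_sum(100_000)
profiler.disable()

# Format the five most expensive entries by cumulative time.
buffer = io.StringIO()
stats = pstats.Stats(profiler, stream=buffer)
stats.sort_stats("cumulative").print_stats(5)
report = buffer.getvalue()
```

For a whole script, the same profiler can be run from the command line with python -m cProfile followed by the script name.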

Conclusions and next steps

Learning roadmap

For web development

- Advanced Django: Django REST Framework, Celery, deployment

- FastAPI in depth: dependency injection, middleware, testing

- Frontend integration: React/Vue.js with Python APIs

- Deployment: Docker, Kubernetes, cloud platforms

For data science

- Advanced analysis: Jupyter notebooks, statistical modeling

- Big data: Dask and PySpark for very large datasets

- Visualization: Dash and Streamlit for interactive web apps

- Databases: SQLAlchemy, database optimization

For machine learning

- Deep learning: advanced PyTorch and TensorFlow

- MLOps: MLflow, Kubeflow, model deployment

- Computer vision: OpenCV, Pillow, image processing

- NLP: spaCy, NLTK, transformer models

Resources for further study

- Python.org: official documentation and tutorials

- Real Python: in-depth tutorials and best practices

- Awesome Python: curated list of Python libraries

- PyPI (Python Package Index): the official package repository, where you can search for new libraries to install

Community and support

- Stack Overflow: the Python tag for specific questions

- Reddit r/Python: community discussions and news

- Python Discord: real-time chat with other developers

- Local Python user groups: meetups in your city